Arpana K. Patel Int. Journal of Engineering Research and Applications www.ijera.com

ISSN : 2248-9622, Vol. 5, Issue 3, ( Part -1) March 2015, pp.140-143


Comparative Analysis of Hand Gesture Recognition Techniques

Arpana K. Patel*, Mr. Amit H. Rathod**

*(PG. Student, Department of CSE, Parul Institute of Engineering & Technology, Vadodara, India) ** (Assistant Professor, Department of CSE, Parul Institute of Engineering & Technology, Vadodara, India)

ABSTRACT

Over the past few years, the use of human hand gestures for interaction with computing devices has continued to be an active area of research. This paper provides a survey of hand gesture recognition. Hand gesture recognition consists of three stages: pre-processing, feature extraction or matching, and classification or recognition. Each stage contains different methods and techniques. This paper gives a short description of the methods used for hand gesture recognition in existing systems, together with a comparative analysis of these methods, their benefits and their drawbacks.

Keywords - Classification, Feature Extraction, Hand Gesture Recognition, Human Computer Interaction (HCI), Image Pre-processing

I. INTRODUCTION

Image processing deals with pictorial information for human interpretation and examination [1]. Gesture recognition is a topic in computer science and language technology whose goal is to interpret human gestures via methods and algorithms. Gestures are basically used for non-verbal communication. Adroit gestures can add to the impact of a speech: they clarify or reinforce ideas and should be well suited to the audience and occasion. Gestures have been used in HCI for many years. Earlier, hardware-based gesture recognition was more prevalent: the user had to wear gloves, a helmet and other heavy apparatus, and sensors, actuators and accelerometers were used for gesture recognition. The whole process, however, was difficult in a real-time environment. Hand gestures can be subdivided into two types, static and dynamic, as shown in Figure 1.1.

Fig.1.1: Types of Gesture

In a static hand gesture, the shape of the hand is used to express a meaning or feeling. Dynamic hand gestures are composed of a series of hand movements, so the motion of the hand has to be tracked [2]. Hand gesture recognition is an active topic in computer vision because of its many applications, such as HCI, sign language interpretation and visual surveillance [3].

II.

H

ANDG

ESTURER

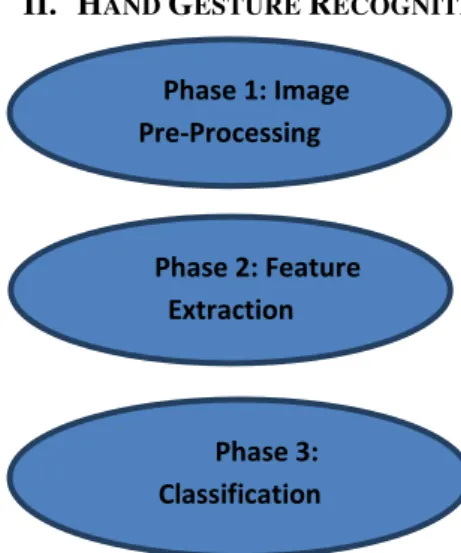

ECOGNITIONFig. 1.2: Three common stage of gesture recognition system

In earlier days, hand gesture detection was done using mechanical devices to obtain information about the hand gesture. The essential aim of building a hand gesture recognition system is to create a natural interaction between human and computer, in which the recognized gestures can be used for controlling a device or conveying meaningful information. Most gesture recognition systems have three stages: image pre-processing, feature extraction, and classification or recognition, as shown in Figure 1.2 [4].

2.1 Image Pre-Processing

[Fig. 1.1 labels: Gesture; Static Gesture; Dynamic Gesture]

[Fig. 1.2 labels: Phase 1: Image Pre-Processing; Phase 2: Feature Extraction; Phase 3: Classification]

In the pre-processing stage, operations are applied to extract the hand gesture from its background and to prepare the hand gesture image for feature extraction. Segmentation is the process of dividing an image into multiple parts; it is used to identify objects or other relevant information in digital images. Skin segmentation can be performed in several color spaces, including RGB, HSI, YCbCr and CIE-Lab [5].
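As an illustration of color-space based skin segmentation, the following is a minimal sketch in plain Python. It assumes the full-range BT.601 (JPEG) RGB to YCbCr conversion and a fixed chrominance box of Cb in [77, 127] and Cr in [133, 173]; these thresholds are commonly quoted illustrative values, not values taken from this paper or from [5], and the function names `rgb_to_ycbcr` and `skin_mask` are hypothetical.

```python
def rgb_to_ycbcr(r, g, b):
    """Convert one 8-bit RGB pixel to YCbCr (full-range BT.601, as used by JPEG)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def skin_mask(image, cb_range=(77, 127), cr_range=(133, 173)):
    """Return a binary mask (1 = skin candidate) for an image given as rows of (R, G, B).

    The default chrominance ranges are assumed, illustrative thresholds.
    Luminance (Y) is ignored so that the test is less sensitive to brightness.
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            _, cb, cr = rgb_to_ycbcr(r, g, b)
            in_cb = cb_range[0] <= cb <= cb_range[1]
            in_cr = cr_range[0] <= cr <= cr_range[1]
            mask_row.append(1 if in_cb and in_cr else 0)
        mask.append(mask_row)
    return mask
```

In practice the thresholds would need tuning for the camera and lighting, and the resulting mask is usually cleaned up with filtering before feature extraction.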

2.2 Feature Extraction

Good pre-processing leads to a better feature extraction process, which in turn plays an important role in successful recognition. Feature extraction is a complex problem in which the whole image or a transformed image is taken as input. The goal of feature extraction is to find the most discriminating information in the recorded images. Feature extraction operates on a two-dimensional image array but produces a feature vector [6].

1) Contour Detection

Contour detection in images is a fundamental problem in computer vision tasks. Contours are distinguished from edges as follows.

Contour tracking algorithm [7]:

Step 1: Scan the image from top to bottom and left to right to find the first contour pixel, marked $X_1$.

Step 2: Search the pixel sequence in the clockwise direction.

Step 3: Scan clockwise until the next pixel value $X_J = 1$.

Step 4: If $X_J = 1$, position of $X_J \neq (i_1, j_1)$ and position of $X_J \neq (i_2, j_2)$, store the position of $X_J$ in the x and y arrays and set $(i, j)$ = position of $X_J$. When $J > 3$, set $(i_1, j_1)$ = position of $X_{J-1}$ and $(i_2, j_2)$ = position of $X_{J-2}$.

Step 5: If the condition of step 4 is false, set $x[J] = x[J-1]$, $y[J] = y[J-1]$, $(i, j)$ = position of $X_{J-1}$, $(i_1, j_1)$ = position of $X_J$ and $(i_2, j_2)$ = position of $X_{J-2}$.

Step 6: Repeat steps 2 to 5 until position of $X_J$ = position of $X_1$.

After running this contour tracking algorithm, the positions of all contour pixels are stored in the x and y arrays.
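The general idea of the steps above (raster scan for a first contour pixel, then a clockwise neighbour search until the start is reached again) can be sketched with standard Moore-neighbour boundary tracing. This is a swapped-in, simplified technique and not the exact algorithm of [7]; the function name `trace_contour`, the binary list-of-lists image format and the simple "back at the start pixel" stopping rule are assumptions made for illustration.

```python
# The 8 neighbours in clockwise order (rows grow downwards), starting at west.
OFFSETS = [(0, -1), (-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1)]


def trace_contour(img):
    """Trace the outer contour of the first object found in a binary image.

    Returns two lists, x (columns) and y (rows), one entry per contour pixel.
    """
    rows, cols = len(img), len(img[0])

    def is_fg(r, c):
        return 0 <= r < rows and 0 <= c < cols and img[r][c] == 1

    # Raster scan: top to bottom, left to right, for the first foreground pixel.
    start = next(((r, c) for r in range(rows) for c in range(cols)
                  if img[r][c] == 1), None)
    if start is None:
        return [], []

    contour = [start]
    cur = start
    back = (start[0], start[1] - 1)  # west neighbour is background by construction
    for _ in range(4 * rows * cols):  # safety bound on the number of moves
        k = OFFSETS.index((back[0] - cur[0], back[1] - cur[1]))
        found = None
        for step in range(1, 9):  # clockwise search, starting after the backtrack pixel
            dr, dc = OFFSETS[(k + step) % 8]
            cand = (cur[0] + dr, cur[1] + dc)
            if is_fg(*cand):
                found = cand
                break
            back = cand  # remember the last background pixel examined
        if found is None or found == start:
            break  # isolated pixel, or the contour has closed
        cur = found
        contour.append(cur)

    x = [c for _, c in contour]
    y = [r for r, _ in contour]
    return x, y
```

Stopping as soon as the start pixel is revisited is the simplest criterion; it can cut the trace short on shapes that touch the start pixel more than once, so a stricter criterion would be needed for such cases.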

2) Histogram Based Feature

Many methods are based on the histogram, where the orientation histogram is used as a feature vector. It is a 2D vector containing the strengths and angles that are present locally throughout the image [8]. The correlation function Cor(j) is computed from the mean and standard deviation of the orientation histogram about the x and y directions as j shifts.

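$$\mathrm{Cor}(j) = \frac{1}{\cdots} + \frac{1}{\cdots} \tag{1}$$

where

$$\cdots = \cdots \ast (\cdots_1 - \cdots_2)^2 + (\cdots_1 - \cdots_2)^2 \tag{2}$$

$$N = \cdots \ast (\cdots_1 - \cdots_2)^2 + (\cdots_1 - \cdots_2)^2 \tag{3}$$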

E() is the expectation operation and σ() is the standard deviation operation. q1 and q2 are the patches about the x-axis and y-axis, taken from the input images and from the model images, and A and B are constants. After the correlation function is evaluated, the orientation histogram of each block is calculated and classified.
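To make the notion of an orientation histogram concrete, here is a minimal sketch that bins gradient angles weighted by gradient strength. It does not reproduce the correlation measure of [8]; the central-difference gradient, the default of 8 bins, the normalization and the function name `orientation_histogram` are all assumptions chosen for illustration.

```python
import math


def orientation_histogram(img, bins=8):
    """Histogram of local gradient orientations, weighted by gradient magnitude.

    img is a 2D list of gray levels. Border pixels are skipped because the
    central difference needs both neighbours.
    """
    rows, cols = len(img), len(img[0])
    hist = [0.0] * bins
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            gx = (img[i][j + 1] - img[i][j - 1]) / 2.0  # horizontal gradient
            gy = (img[i + 1][j] - img[i - 1][j]) / 2.0  # vertical gradient
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue  # flat region, no orientation
            angle = math.atan2(gy, gx) % (2 * math.pi)  # angle in [0, 2*pi)
            index = int(angle / (2 * math.pi) * bins) % bins
            hist[index] += magnitude
    total = sum(hist)
    return [h / total for h in hist] if total else hist
```

The resulting vector can be used directly as a feature vector, or its mean and standard deviation can be compared between an input image and a stored model.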

3) Moments Invariant

Features associated with images are called invariant if they are not affected by certain changes in the view of the object, such as translation, scaling, rotation and lighting conditions [7]. Hu proposed seven well-known invariant moments, which are used in many image classification approaches and are not affected by translation, scale or rotation [9].
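As a small worked example of moment invariants, the sketch below computes the first two of Hu's seven invariants from normalized central moments, using the standard definitions $\phi_1 = \eta_{20} + \eta_{02}$ and $\phi_2 = (\eta_{20} - \eta_{02})^2 + 4\eta_{11}^2$. The function name `hu_first_two` and the list-of-lists image format are assumptions; the remaining five invariants are omitted for brevity.

```python
def hu_first_two(img):
    """Return the first two Hu invariants (phi1, phi2) of a 2D intensity image."""
    # Raw moments m00, m10, m01 give the mass and the centroid.
    m00 = m10 = m01 = 0.0
    for y, row in enumerate(img):
        for x, v in enumerate(row):
            m00 += v
            m10 += x * v
            m01 += y * v
    if m00 == 0:
        raise ValueError("image has no foreground mass")
    xc, yc = m10 / m00, m01 / m00

    # Second-order central moments (translation invariant).
    mu20 = mu02 = mu11 = 0.0
    for y, row in enumerate(img):
        for x, v in enumerate(row):
            mu20 += (x - xc) ** 2 * v
            mu02 += (y - yc) ** 2 * v
            mu11 += (x - xc) * (y - yc) * v

    # Normalized central moments: eta_pq = mu_pq / m00^(1 + (p + q) / 2),
    # so the exponent is 2 for all second-order moments.
    eta20, eta02, eta11 = mu20 / m00 ** 2, mu02 / m00 ** 2, mu11 / m00 ** 2

    phi1 = eta20 + eta02
    phi2 = (eta20 - eta02) ** 2 + 4 * eta11 ** 2
    return phi1, phi2
```

Shifting the shape inside the image or rotating it by 90 degrees leaves both values unchanged up to floating-point error, which is what makes them usable as pose-independent features.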

2.3 Classification or Recognition

The recognition process depends on the feature extraction method and the classification algorithm. In the recognition stage, a new feature vector can be classified once the system has been trained with enough samples, so that classification accuracy is good. Classification is done using popular methods such as k-means, mean shift, Hidden Markov Model, Principal Component Analysis and dynamic time warping.

1) k-Means Clustering:

The k-means problem is to determine k points, called centers, so as to minimize the clustering error, defined as the sum of the squared distances of all data points to their respective cluster centers [10]. The algorithm starts by randomly locating k cluster centers in spectral space. Each pixel in the input image is then assigned to the nearest cluster center, and each cluster center is moved to the average of the values assigned to it. This process is repeated until a stopping condition is met. The stopping condition may be either a maximum number of iterations or a tolerance threshold that designates the smallest distance the cluster centers may move before the iteration stops.
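The iteration described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: points are tuples of numbers, the initial centers are drawn at random from the data with a fixed seed, and the names `kmeans`, `sq_dist`, `max_iter` and `tol` are hypothetical.

```python
import random


def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, max_iter=100, tol=1e-6, seed=0):
    """Cluster points into k groups; returns (centers, labels)."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # random initial centers taken from the data
    for _ in range(max_iter):  # stopping condition 1: iteration limit
        # Assignment step: each point goes to its nearest center.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: sq_dist(p, centers[c]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its members.
        new_centers = [
            tuple(sum(col) / len(members) for col in zip(*members))
            if members else centers[c]  # keep an empty cluster's center in place
            for c, members in enumerate(clusters)
        ]
        shift = max(sq_dist(a, b) for a, b in zip(centers, new_centers)) ** 0.5
        centers = new_centers
        if shift < tol:  # stopping condition 2: centers barely moved
            break
    labels = [min(range(k), key=lambda c: sq_dist(p, centers[c])) for p in points]
    return centers, labels
```

The result depends on the initial centers, which is one reason the choice of k and of the initialization is listed as a limitation of the method.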

2) Mean Shift clustering


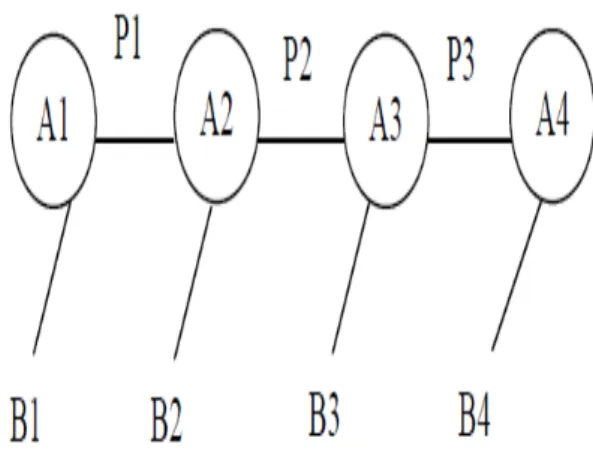

3) Hidden Markov Model (HMM)

In describing hidden Markov models it is convenient first to consider Markov chains. Markov chains are simply finite-state automata in which each state transition arc has an associated probability value; the probability values of the arcs leaving a single state sum to one. Markov chains impose the restriction on the finite-state automaton that a state can have only one transition arc with a given output, a restriction that makes Markov chains deterministic. An HMM is defined as a set of states, of which one state is the initial state, a set of output symbols, and a set of state transitions. An HMM can be depicted as in Figure 1.3 [2]. The sets of hand positions in each state, A1 to Ai, represent the input states. The probabilities of transferring from one state to another, Pab and Pba, represent the state transitions. The specific postures or gestures B1 to Bi represent the corresponding outputs. The HMM database therefore holds many samples per gesture: accuracy increases with the number of samples, while speed decreases.

Fig. 1.3 HMM
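To illustrate how states, output symbols and transition probabilities work together, the sketch below evaluates the probability that an HMM produces a given output sequence, using the standard forward algorithm. The names `forward_likelihood`, `trans`, `emit` and `init`, as well as the numbers in the toy model, are assumptions for illustration and do not come from the paper.

```python
def rows_sum_to_one(matrix, eps=1e-9):
    """Check that the probabilities leaving each state sum to one."""
    return all(abs(sum(row) - 1.0) < eps for row in matrix)


def forward_likelihood(obs, trans, emit, init):
    """Probability of the output sequence obs under an HMM (forward algorithm).

    trans[r][s]: probability of moving from state r to state s
    emit[s][o]:  probability that state s produces output symbol o
    init[s]:     probability of starting in state s
    """
    n = len(init)
    # alpha[s] = probability of the outputs seen so far, ending in state s
    alpha = [init[s] * emit[s][obs[0]] for s in range(n)]
    for o in obs[1:]:
        alpha = [
            sum(alpha[r] * trans[r][s] for r in range(n)) * emit[s][o]
            for s in range(n)
        ]
    return sum(alpha)


# Hypothetical two-state model with three output symbols (0, 1, 2).
TRANS = [[0.7, 0.3], [0.4, 0.6]]
EMIT = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
INIT = [0.6, 0.4]
```

In a recognizer, one such model would typically be trained per gesture, and an observed sequence would be assigned to the gesture whose model gives the highest likelihood.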

4) Principal Component Analysis (PCA)

PCA-based approaches normally contain two stages: training and testing. In the training stage, an eigenspace is established from the hand posture training images using PCA, and the training images are mapped to this eigenspace for classification [11]. In the testing stage, a hand gesture test image is projected onto the eigenspace and classified by an appropriate classifier based on Euclidean distance. PCA lacks detection ability.
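The training and testing stages can be sketched as follows. To keep the example short and dependency-free, it is deliberately simplified: the eigenspace is reduced to a single principal direction found by power iteration, and the classifier is a nearest-neighbour rule on the projected values. These simplifications, and the names `fit_first_component`, `project` and `classify`, are assumptions and not the method of [11].

```python
import math


def fit_first_component(samples, iters=200):
    """Training: return (mean, direction) of the first principal component."""
    n, d = len(samples), len(samples[0])
    mean = [sum(col) / n for col in zip(*samples)]
    centered = [[v - m for v, m in zip(row, mean)] for row in samples]
    # Sample covariance matrix of the centered data.
    cov = [[sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    # Power iteration; the start vector is arbitrary (it must not be exactly
    # orthogonal to the leading eigenvector).
    vec = [1.0 / (i + 1) for i in range(d)]
    for _ in range(iters):
        nxt = [sum(cov[a][b] * vec[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(v * v for v in nxt))
        if norm == 0:
            break
        vec = [v / norm for v in nxt]
    return mean, vec


def project(x, mean, vec):
    """Map a feature vector onto the one-dimensional eigenspace."""
    return sum((xi - mi) * vi for xi, mi, vi in zip(x, mean, vec))


def classify(x, samples, labels, mean, vec):
    """Testing: label of the training sample nearest to x in the eigenspace."""
    px = project(x, mean, vec)
    dists = [abs(px - project(s, mean, vec)) for s in samples]
    return labels[dists.index(min(dists))]
```

A real system would flatten each training image into a long vector, keep several leading eigenvectors rather than one, and use a linear algebra library for the eigen-decomposition.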

5) Dynamic Time Warping (DTW)

DTW is used to find the optimal alignment of two signals. The DTW algorithm calculates the distance between each possible pair of points from the two signals, where the distance is defined in terms of their associated feature values. These distances are used to calculate a cumulative distance matrix, and the least expensive path through this matrix is found. This path represents the ideal warp: the synchronization of the two signals that minimizes the feature distance between their synchronized points. This process is done after the signals are normalized and smoothed. DTW is useful in various applications, such as speech recognition, data mining and movement recognition [12].
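The cumulative distance matrix and the cost of the least expensive path can be computed with a short dynamic program. The sketch below assumes one-dimensional signals and the absolute difference as the point-to-point distance; the function name `dtw_distance` is hypothetical.

```python
def dtw_distance(a, b):
    """Cost of the cheapest warping path aligning sequences a and b."""
    n, m = len(a), len(b)
    inf = float("inf")
    # acc[i][j] = cheapest cumulative cost of aligning a[:i] with b[:j]
    acc = [[inf] * (m + 1) for _ in range(n + 1)]
    acc[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = abs(a[i - 1] - b[j - 1])  # distance between the paired points
            acc[i][j] = cost + min(
                acc[i - 1][j],      # a advances, b repeats its point
                acc[i][j - 1],      # b advances, a repeats its point
                acc[i - 1][j - 1],  # both advance
            )
    return acc[n][m]
```

Filling the full matrix takes time and memory proportional to the product of the two sequence lengths, which is the quadratic complexity noted in Table 1.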

III. ANALYSIS

Hanning Z. et al. [13] presented a hand gesture recognition system based on a local orientation histogram feature distribution model. Skin-color based segmentation algorithms were used to find a mask for the hand region, with the input RGB image converted into the HSI color space. To obtain a compact feature representation, k-means clustering was applied.

Mohammad et al. [14] use the YCrCb color space, which is an encoding of nonlinear RGB, for accurate skin detection during segmentation. For contour detection, the boundary region of the detected hand is found, a rectangular box is drawn around the contour, and the center of the hand is located. Classification is done using a Hidden Markov Model (HMM), in which a Markov chain emits a sequence of observable outputs. The model parameter is λ = (A, B, π), where A is the transition matrix, B is the observation matrix and π is the initial probability distribution.
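Given a model λ = (A, B, π), the most probable hidden state sequence behind a sequence of observable outputs can be recovered with the standard Viterbi algorithm. The sketch below is illustrative only: the function name `viterbi` and the numbers in the toy model are assumptions, and [14] may decode or score sequences differently.

```python
def viterbi(obs, A, B, pi):
    """Most probable state path for the outputs obs under lambda = (A, B, pi).

    A[r][s]: transition matrix, B[s][o]: observation matrix,
    pi[s]: initial probability distribution. Returns (path, probability).
    """
    n = len(pi)
    # delta[s] = probability of the best path so far that ends in state s
    delta = [pi[s] * B[s][obs[0]] for s in range(n)]
    backpointers = []
    for o in obs[1:]:
        prev = delta
        pointers, delta = [], []
        for s in range(n):
            best = max(range(n), key=lambda r: prev[r] * A[r][s])
            pointers.append(best)  # best predecessor of state s
            delta.append(prev[best] * A[best][s] * B[s][o])
        backpointers.append(pointers)
    # Backtrack from the most probable final state.
    last = max(range(n), key=lambda s: delta[s])
    path = [last]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    path.reverse()
    return path, delta[last]


# Hypothetical model: 2 hidden states, 3 observable output symbols.
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
PI = [0.6, 0.4]
```

For long sequences the products become very small, so practical implementations usually work with log-probabilities instead of raw probabilities.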

Saad et al. [15] proposed a process for detecting, understanding and translating sign language gestures into vocal language. There are two modes: recording and translation. In recording mode, the user adds a gesture to the dictionary. In translation mode, a gesture is compared with the gestures stored in the dictionary. The Dynamic Time Warping algorithm is used to compare gestures. It provides 91% accuracy but is not suitable for finger movements.


Table 1 Comparison of Existing Methods

| Technique | Advantages | Limitations |
| --- | --- | --- |
| k-Means | Produces tighter clusters. | Prediction of k is difficult for a fixed number of clusters. |
| Mean shift clustering | Relies on a single parameter. Robust. | Ambiguity in optimal parameter selection. |
| Hidden Markov Model | Easily extended to deal with strong TC tasks. Easy to understand. | Long assumptions about data. Huge number of parameters needed. |
| Principal Component Analysis | Achieves satisfactory real-time performance. | Lack of detection ability. |
| Dynamic time warping | Reliable time alignment; robust to noise. | Complexity is quadratic; a distance metric needs to be defined. |

IV. CONCLUSION

Gesture recognition has recently been a very active area of research. Hand gesture recognition is often sensitive to poor resolution, drastic illumination conditions, complex or cluttered backgrounds, detection of multiple hand gestures and occlusion, among other prevalent problems. This paper has described various methods used at each stage of a hand gesture recognition system. The pre-processing stage performs a sequence of steps such as segmentation, filtering and edge detection, using different methods and algorithms to detect the hand region and filter noise. In the feature extraction stage, the contour tracking algorithm, moment invariants and the orientation histogram are most commonly used. For classification, HMM, clustering, PCA and DTW are used. A comparative analysis of all these methods has been presented.

REFERENCES

[1] Rafael C. Gonzalez, Richard E. Woods, Digital Image Processing, 3rd edition, 2009.

[2] Siddharth S. Rautaray, Anupam Agrawal, “Vision based hand gesture recognition for human computer interaction: a survey”, Springer Science, 06-Nov-2012.

[3] Lingchen Chen, Feng Wang, Hui Deng, Kaifan Ji, “A Survey on Hand Gesture Recognition”, International Conference on Computer Sciences and Applications, IEEE, pg. 313-316, 2013.

[4] Siddharth S. Rautaray, Anupam Agrawal, “Real time hand gesture recognition system for dynamic application”, International Journal of UbiComp (IJU), Vol. 3, No. 1, January 2012.

[5] Son Lam Phung, Abdesselam Bouzerdoum, Douglas Chai, “Skin Segmentation Using Color Pixel Classification: Analysis and Comparison”, IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 27, No. 1, January 2005.

[6] Haitham Hasan, S. Abdul-Kareem, “Static hand gesture recognition using neural networks”, Springer, pg. 147-181, 2012.

[7] Dipak K. Ghosh, Samit Ari, “A Static Hand Gesture Recognition Algorithm Using K-Means Based Radial Basis Functional Neural Network”, IEEE, Dec-Jan. 2011.

[8] Hyung-Ji Lee, Jae-Ho Chung, “Hand Gesture Recognition using Orientation Histogram”, IEEE, pg. 1355-1358, 1999.

[9] Shaui Yang, Parashan, Peter, “Hand Gesture Recognition: An Overview”, IEEE, pg. 63-69, 2013.

[10] Rafiqul Z. Khan, Noor A. Ibraheem, “Hand Gesture Recognition: A Literature Review”, International Journal of Artificial Intelligence and Applications, Vol. 3, No. 4, pg. 162-174, July 2012.

[11] Naseer H. Dardas, Emil M. Petriu, “Hand Gesture Detection and Recognition Using Principal Component Analysis”, IEEE, Vol. 11, No. 1, 2011.

[12] Haitham S. Badi, Sabah Hussein, “Hand Posture and Gesture Recognition Technology”, Springer, pg. 1-8, 2014.

[13] Hanning Zhou, Dennis J. Lin and Thomas S. Huang, “Static Hand Gesture Recognition based on Local Orientation Histogram Feature Distribution Model”, Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops, 2004.

[14] Mohammad I. Khan, Sheikh Md. Ashan, Razib Chandra, “Recognition of Hand Gesture Recognition Using Hidden Markov Model”, IEEE, pg. 150-154, 2012.

[15] Saad, Majid, Bilal, Salman, Zahid, Ghulam, “Dynamic Time Wrapping based Gesture Recognition”, IEEE, pg. 205-210, 2014.

[16] Ayan, Pragya, Rohit, “Information Measure