ORIENTAÇÃO

Hugo David Jesus Vieira

MESTRADO EM ENGENHARIA INFORMÁTICA

Touch ‘n’ Sketch: Pen and Fingers on a Multi‐Touch Sketch Application for Tablet PC’s

Resumo

Em muitas áreas criativas e técnicas, os profissionais fazem uso de esboços em papel

para desenvolver e expressar conceitos e modelos. O papel oferece um ambiente quase livre

de restrições, onde eles têm tanta liberdade para se expressar quanto necessitam. No entanto,

o papel tem algumas desvantagens, tais como o tamanho fixo e não ter a capacidade de

manipular o conteúdo (a não ser removê‐lo ou risca‐lo), mas que podem ser superadas através

da criação de sistemas que podem oferecer a mesma liberdade que o papel, mas nenhuma das

desvantagens e limitações. Só nos últimos anos e com o desenvolvimento de ecrãs sensíveis ao

toque que também têm a capacidade de interagir com uma caneta, é que a tecnologia tem‐se

tornado massivamente disponível que permite fazer exactamente isso.

Neste projecto foi criado um protótipo com o objectivo de encontrar um conjunto de

interacções mais úteis e utilizáveis, que são compostas de combinações de multi‐toque e

caneta. Como domínio de aplicação para o projecto foram seleccionadas as ferramentas

Computer Aided Software Engineering (CASE), uma vez que abordam uma disciplina sólida e

bem definida mas ainda com espaço suficiente para novos desenvolvimentos. Este foi o

resultado da pesquisa realizada para encontrar o domínio da aplicação, que envolveu a análise

de ferramentas de desenho de várias possíveis áreas e domínios.

Estudos de utilizador foram conduzidos utilizando Model Driven Inquiry (MDI) para ter

uma melhor compreensão das actividades humanas e dos conceitos envolvidos na criação de

sketches. Em seguida, o protótipo foi implementado, através do qual foi possível executar

avaliações de utilizador sobre os conceitos de interacção criados. Os resultados obtidos

validaram a maioria das interacções, em face de somente testes limitados serem possíveis no

momento. Os utilizadores tiveram mais problemas usando a caneta, no entanto o

reconhecimento de escrita e tinta foram muito eficazes e os utilizadores rapidamente

aprenderam as manipulações e os gestos da Natural User Interface (NUI).

Multi‐toque Tablet‐PC

HCI

NUI

HAM

Ferramenta CASE de Sketch

Reconhecimento de tinta digital

Abstract

In many creative and technical areas, professionals use paper sketches to develop and express concepts and models. Paper offers an almost constraint‐free environment where they have as much freedom to express themselves as they need. However, paper has some disadvantages, such as its fixed size and the inability to manipulate the content (other than removing it or scratching it out). These can be overcome by creating systems that offer the same freedom as paper but none of its disadvantages and limitations. Only in recent years, with the development of touch‐sensitive screens that can also interact with a stylus, has the technology that allows precisely this become widely available.

In this project a prototype was created with the objective of finding a set of the most useful and usable interactions composed of combinations of multi‐touch and pen. Computer Aided Software Engineering (CASE) tools were selected as the application domain, because they address a solid and well‐defined discipline that still has sufficient room for new developments. This choice resulted from the research conducted to find an application domain, which involved analyzing sketching tools from several possible areas and domains.

User studies were conducted using Model Driven Inquiry (MDI) to gain a better understanding of the human activities and concepts involved in creating sketches. The prototype was then implemented, which made it possible to run user evaluations of the interaction concepts created. The results validated most interactions, although only limited testing was possible at the time. Users had more problems using the pen; however, handwriting and ink recognition were very effective, and users quickly learned the manipulations and gestures of the Natural User Interface (NUI).

Multi‐touch Tablet‐PC

HCI

NUI

HAM

Sketch CASE Tool

Digital Ink‐Recognition

Acknowledgements

Firstly, I want to thank my supervisors, Dr. Josef Petrus van Leeuwen and Dr. Leonel Domingos Telo Nóbrega, for placing their confidence in me and for agreeing to supervise me in this project. I also want to thank them for their patience, availability and resilience throughout the bumps in the project.

Secondly, I would like to thank all my master’s colleagues and friends, who always lent a helping hand when needed and who showed interest in and care for me and this project, with special thanks to Aquilino, Élvio and Juan.

Special thanks go to the users who participated in the user studies and user evaluations, for their availability and their willingness to help and participate; without them this project would not have been possible.

Finally, and most importantly, I would like to especially thank my family; without their support, encouragement and sacrifice I would not have been able to complete my studies and, consequently, this project.

Index

Resumo
Palavras‐chave
Abstract
Keywords
Acknowledgements
Index
Figure list
Table list
Acronyms
1 Introduction
1.1 Motivation
1.2 Problem and Objectives
1.3 Contribution
1.4 Approach
1.5 Document Organization
2 State of the art
2.1 The touch environment
2.1.1 Interface type NUI
2.1.1.1 Comparing NUI vs. GUI and CLI
2.1.2 OCGM interaction style
2.1.3 Using the user’s: Innate abilities and learned skills
2.1.4 NUI Guidelines
2.1.4.1 Instant expertise
2.1.4.2 Cognitive load
2.1.4.3 Progressive learning
2.1.4.4 Direct interaction
2.2 Related Work and Domain Research
2.2.1 CAD, CASE and Sketch tools
2.2.1.1 The Sketching Paradigm
2.2.1.2 Recognizing the Sketch
2.2.1.3 CASE tools
2.2.1.4 Conclusion
2.2.2 Digital Documents
2.2.2.1 Conclusion
2.2.3 Digital Arts
2.2.3.1 Conclusion
2.2.4 Touch and Multi‐Touch
2.2.4.1 Multi‐touch interaction
2.2.4.2 Touch & Write
2.2.5 Conclusion
2.3 CASE tools
2.3.1 Existing tools
2.3.2 User Interface
2.4 Technology
2.4.1 The Tablet‐PC
2.5 Summary
3 Problem Analysis
3.1 Models
3.1.1 Context
3.1.2 Participation
3.1.3 Performance
3.2 Scenario
3.3 User studies’ results
3.4 Requirements
3.4.1 User Interface
3.4.2 Interaction Techniques
4 Concept
4.1 Concepts
4.2 Affordances
4.3 Actions‐Affordances Mapping
4.4 Task Descriptions
4.5 Storyboard
5 The Prototype
5.1 Hardware
5.1.1 Dual digitizer
5.1.2 Stylus (pen)
5.2 ...
5.2.1 Microsoft Surface
5.2.1.1 Microsoft Surface Toolkit for Windows Touch Beta
5.2.2 Libraries and Frameworks used
5.3 Architecture
5.3.1 Overview
5.3.2 Events
5.3.2.1 Touch
5.3.2.2 Diagram Element States
5.3.2.3 Ink States
5.3.2.4 Connection Geometry update
5.3.3 Components
5.3.3.1 Widget panel
5.3.3.2 Drawing Box
5.3.3.3 MainWindow
5.4 The Interface/Design
5.5 Challenges and limitations
6 Validation ‐ User Tests and Evaluation
6.1 First stage user tests
6.1.1 Test 1
6.1.1.1 Conclusions
6.1.1.2 Design decision
6.1.2 Tests 2 and 3
6.1.2.1 Conclusions
6.1.2.2 Design decision
6.1.3 Tests 4 and 5
6.1.3.1 Conclusions
6.1.3.2 Design decision
6.2 Second stage user tests
6.2.1 Test 1 and Test 2
6.2.1.1 Conclusions
6.2.1.2 Design decision
6.2.2 Tests 3 and 4
6.2.2.1 Conclusions
6.2.2.2 Design decision
6.2.3 Tests 5 and 6
6.2.3.1 Conclusions
6.2.3.2 Design decision
6.2.4 Test 7
6.2.4.1 Conclusions
6.2.4.2 Design decision
6.3 Third stage user tests
6.3.1 Conclusions
7 Conclusion
7.1 Future work
References
Appendices

FIGURE 1: GESTURES VS. MANIPULATIONS. (GEORGE, "TERMINOLOGY: THE DIFFERENCE BETWEEN A GESTURE AND A

MANIPULATION" AT RON GEORGE BLOG, 2009) ... 8

FIGURE 2: BLAKE'S MOTTO FOR NUI GUIDELINES. (BLAKE, NATUAL USER INTERFACES IN .NET, 2011) ... 10

FIGURE 3: TYPES OF DIRECTNESS ILLUSTRATION. (BLAKE, NATUAL USER INTERFACES IN .NET, 2011) ... 12

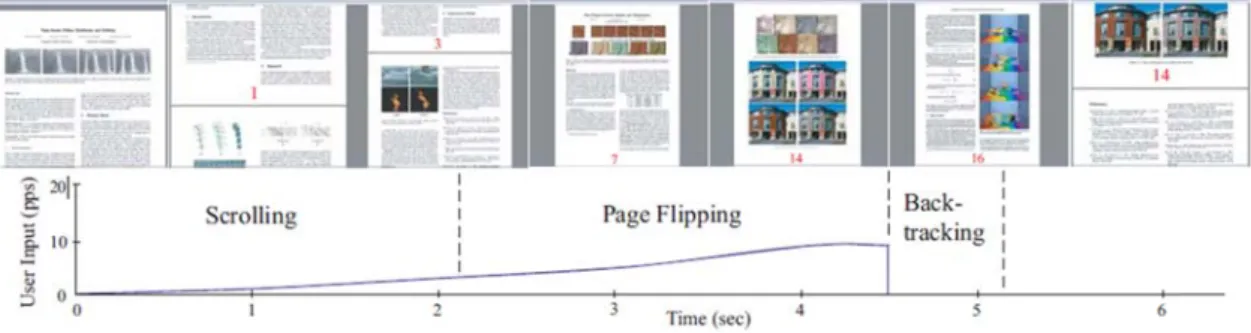

FIGURE 4: FLIPPER'S BEHAVIOUR. (SUN & GUIMBRETIÈRE, 2005) ... 18

FIGURE 5: MULTI‐FLICK SCROLLING TECHNIQUE. (ALIAKSEYEU, IRANI, LUCERO, & SUBRAMANIAN, 2008) ... 19

FIGURE 6: "USER BENDS A DRAWING BY TOUCHING IT WITH HIS FINGERS". (MOSCOVICH, 2006) ... 20

FIGURE 7: MULTI‐FINGER CURSOR TECHNIQUES: MULTIPLE CURSORS (LEFT) AND SINGLE CURSOR (RIGHT). (MOSCOVICH, 2006) ... 21

FIGURE 8: "AN ADJUSTABLE AREA CURSOR MAKES IT EASY TO SELECT ISOLATED TARGETS (LEFT) WHILE SEAMLESSLY ALLOWING FOR PRECISE SELECTION OF INDIVIDUAL TARGETS (RIGHT)". (MOSCOVICH, 2006) ... 21

FIGURE 9: MICROSOFT VISIO 2010, MAIN UML INTERFACE. ... 26

FIGURE 10: VISUAL PARADIGM, UML CLASS DIAGRAM. (VISUAL PARADIGM INTERNATIONAL, N.D.) ... 26

FIGURE 11: FREEHAND SHAPES IN VISUAL PARADIGM. (VISUAL PARADIGM INTERNATIONAL, 2009) ... 27

FIGURE 12: META SKETCH EDITOR, CLASS DIAGRAM FOR USER CENTERED DESIGN. ... 27

FIGURE 13: AGROUML GENERAL INTERFACE. (COLLABNET, INC., N.D.) ... 28

FIGURE 14: TAHUTI ‐ THE ORIGINAL USER STROKES VIEW (LEFT); THE INTERPRETED VIEW (RIGHT). (HAMMOND, GAJOS, DAVIS, & SHROBE, 2002) ... 29

FIGURE 15: SUMLOW’S VARIOUS RECOGNISED UML CONSTRUCTS ‐ SKETCH VIEW (LEFT) AND DIAGRAM VIEW (RIGHT). (CHEN, GRUNDY, & HOSKING, 2003) ... 30

FIGURE 16: COMMON INTERFACE WIREFRAME FOR TRADITIONAL CASE TOOLS (LEFT) AND FOR SKETCH BASED (RIGHT). ... 30

FIGURE 17: HEROT’S AND WEINZAPFEL’S ONE‐POINT TOUCH INPUT OF VECTOR INFORMATION, FORCE AND TORQUE ILLUSTRATION. (BUXTON, "MULTI‐TOUCH SYSTEMS THAT I HAVE KNOWN AND LOVED" AT BILLBUXTON.COM, 2007) ... 32

FIGURE 18: THE HP TOUCHSMART TM2, A CONVERTIBLE TABLET PC. (HEWLETT‐PACKARD DEVELOPMENT COMPANY, L.P., N.D.) ... 33

FIGURE 19: MICROSOFT’S COURIER BOOKLET. (PAPERBOY, 2009) ... 33

FIGURE 20: DYNABOOK CONCEPTUAL SKETCH. (HOLWERDA, "A SHORT HISTORY OF THE TABLET COMPUTER" AT OSNEWS, 2010) ... 34

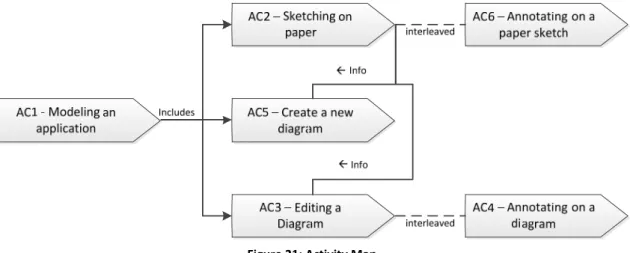

FIGURE 21: ACTIVITY MAP. ... 38

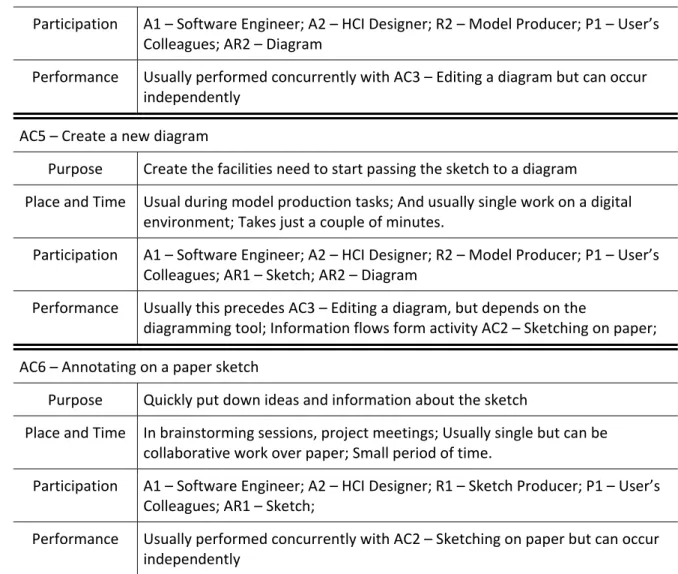

FIGURE 22: PARTICIPATION MAP. ... 41

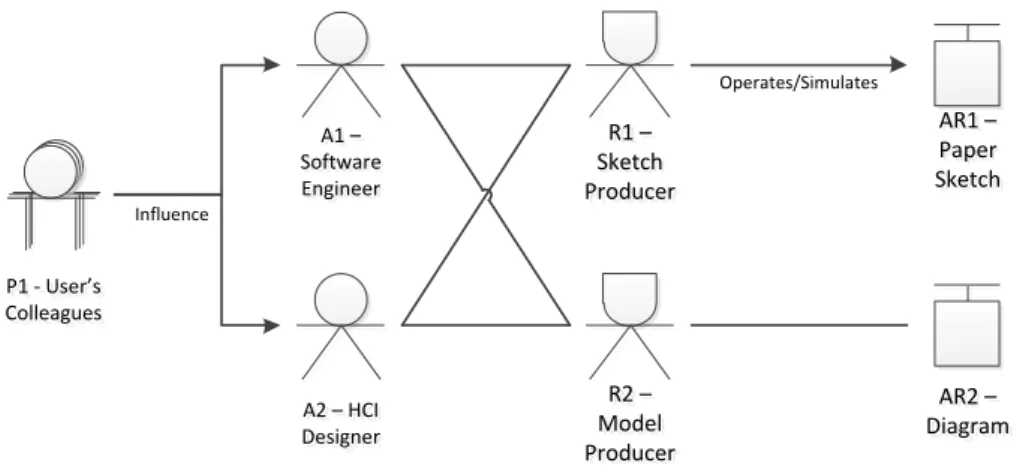

FIGURE 23: PERFORMANCE MAP. ... 43

FIGURE 24: STORYBOARD ‐ ADDING AN ELEMENT WITH THE FINGER. ... 60

FIGURE 25: STORYBOARD ‐ NAMING AN ELEMENT WITH THE PEN. ... 60

FIGURE 26: STORYBOARD ‐ SKETCHING A GROUP (LEFT) AND A CONNECTION (RIGHT) WITH THE PEN. ... 61

FIGURE 27: STORYBOARD ‐ REMOVING AN ELEMENT FORM A GROUP. ... 61

FIGURE 28: STORYBOARD ‐ HIGHLIGHTING AN ELEMENT WITH A PEN GESTURE. ... 61

FIGURE 29: EMR PEN DETECTION TECHNOLOGY. (WACOM, 2007) ... 63

FIGURE 30: HP TOUCHSMART TM2 DIGITIZER PEN. (HEWLETT‐PACKARD DEVELOPMENT COMPANY, L.P, 2007) ... 64

FIGURE 31: EMR PEN EQUIPPED WITH AN ERASER. (WACOM, 2007) ... 64

FIGURE 32: TOUCH DEVELOPMENT IN 2009 (TOP), IN 2010 Q1 (MIDDLE) AND THE TREND (BOTTOM). (FELDKAMP, 2009) ... 65

FIGURE 33: CLASS DIAGRAM’S DIVISION ‐ GROUP 1: DIAGRAM ELEMENTS; GROUP 2: CONNECTIONS; GROUP 3: INTERFACES; GROUP 4: DRAWING BOX; GROUP 5: WIDGET PANEL; GROUP 6: MAIN WINDOW. ... 67

FIGURE 35: SEQUENCE OF TOUCH EVENTS FROM WINDOWS 7 RAW TOUCH API; STARTING FROM THE LEFT WITH

TOUCHENTER AND ENDING WITH TOUCHLEAVE ON THE RIGHT. (BLAKE, NATUAL USER INTERFACES IN .NET, 2011) . 71

FIGURE 36: DIAGRAM ELEMENT’S STATE DIAGRAM. ... 73

FIGURE 37: INK STATE CHART. ... 74

FIGURE 38: CONNECTIONS GEOMETRY UPDATE’S DATA FLOW DIAGRAM (DFD)... 75

FIGURE 39: DRAWING BOX’S CANVASSES LAYER COMPOSITION. ... 76

FIGURE 40: REPRESENTATION OF THE ELEMENTS ON THE GESTURESINKCANVAS FOR THE DETECTION OF THE CONNECTION GESTURE AND. ... 76

FIGURE 41: REPRESENTATION OF THE ELEMENTS AND CONNECTIONS ON THE GESTURESINKCANVAS FOR THE CREATION OF A GROUP USING A PEN GESTURE. ... 77

FIGURE 42: REPRESENTATION OF THE ELEMENTS AND THE CONNECTIONS ON THE GESTURESINKCANVAS FOR REMOVING. .... 77

FIGURE 43: REPRESENTATION OF ELEMENTS AND CONNECTIONS ON THE GESTURESINKCANVAS AND SELECTION. ... 78

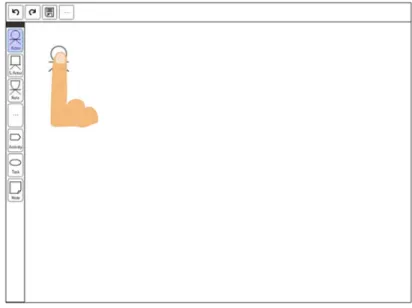

FIGURE 44: USER INTERFACE, MAIN WINDOW. ... 79

FIGURE 45: POSSIBLE STATES FOR THE ELEMENTS: DEFAULT ON THE LEFT, SELECTED ON THE CENTER AND HIGHLIGHTED ON THE RIGHT. ... 79

FIGURE 46: CONNECTION SELECTED AND ATTACHED TO ELEMENTS. ... 80

FIGURE 47: CONNECTION ATTACHING TO AN ELEMENT (LEFT) AND DETACHING (RIGHT). ... 80

TABLE 1: DIFFERENCES BETWEEN MANIPULATIONS AND GESTURES. (GEORGE, "TERMINOLOGY: THE DIFFERENCE BETWEEN A GESTURE AND A MANIPULATION" AT RON GEORGE BLOG, 2009)

TABLE 2: ACTIVITY INVENTORY.

TABLE 3: ACTIVITY PROFILE CARDS

TABLE 4: PARTICIPATION INVENTORY

TABLE 5: TASK INVENTORY

TABLE 6: INTERACTION AFFORDANCES EXAMPLE – ONE FINGER TAP, PINCH AND ONE FINGER HOLD AND PEN DRAW.

TABLE 7: TASK INVENTORY

TABLE 8: TASK DESCRIPTION CARDS.

TABLE 9: RAW TOUCH API UIELEMENT CLASS' TOUCH EVENTS AND MOUSE EQUIVALENTS. (BLAKE, NATURAL USER INTERFACES IN .NET, 2011)

Acronyms

API ‐ Application Programming Interface

ATM ‐ Automatic Teller Machine

CAAD ‐ Computer Aided Architecture Design

CAD ‐ Computer Aided Design

CAP ‐ Canonical Abstract Prototype

CASE ‐ Computer Aided Software Engineering

CLI ‐ Command Line Interface

CMF ‐ Compound Multi‐Flick

CMOF ‐ Complete MOF

CTT ‐ Concurrent Task Tree

DFD ‐ Data Flow Diagram

DOF ‐ Degrees of Freedom

EMR ‐ Electro‐Magnetic Resonance

EUC ‐ Essential Use Case

FTIR ‐ Frustrated Total Internal Reflection

GUI ‐ Graphical User Interface

HAM ‐ Human Activity Modeling

HCI ‐ Human‐Computer Interaction

ISDOS ‐ Information System Design and Optimization System

LCD ‐ Liquid Crystal Display

MDD ‐ Model Driven Development

MDI ‐ Model Driven Inquiry

MFCT ‐ Multi‐Finger Cursor Techniques

MOF ‐ Meta‐Object Facility

NUI ‐ Natural User Interface

OCGM ‐ Objects, Containers, Gestures and Manipulations

PC ‐ Personal Computer

PSL/PSA ‐ Problem Statement Language/Problem Statement Analyzer

RSVP ‐ Rapid Serial Visual Presentation

SILK ‐ Sketching Interfaces Like Krazy

UIDST ‐ User Interface Design Sketching Tools

UML ‐ Unified Modeling Language

WIMP ‐ Windows, Icons, Menus and Pointers

WPF ‐ Windows Presentation Foundation

XMI ‐ XML Metadata Interchange

XML ‐ Extensible Markup Language

1 Introduction

Tablet‐PCs have been around for quite some time, and with them the development of sketch applications that take advantage of the stylus for sketching or handwriting. There have been developments in several areas such as architecture, graphic and industrial design, health care, and even more personal uses such as simple note taking and personal journals. Nevertheless, one area where there have not been many developments is CASE tools. Some sketching tools exist that allow users to create complete diagrams or even models, but in most cases they are academic and usually not very stable versions.

It is well known that when developers and designers start a new project they use paper sketches for their concepts, as paper is an almost constraint‐free environment where they have as much freedom to express their concepts as they need. However, paper has some disadvantages, such as its size and the inability to manipulate the content (other than removing it or scratching it out), which can be overcome by creating systems that offer the same freedom as paper but none of its disadvantages and limitations.

1.1 Motivation

With the booming advances in mobile technology, there have been recent developments in touch‐sensitive screens with dual‐input digitizers. These developments open the possibility of interacting with portable devices such as the Tablet‐PC using a pen as well as fingers.

This provides a new opportunity for more intuitive sketch applications that combine pen and finger input in a way that is more effective, more efficient and more natural than finger‐only or pen‐only interaction. Such a combination could improve the user’s interaction with the tablet.

1.2 Problem and Objectives

As implied in the previous section, the main objective of this project revolves around sketch applications and combinations of pen and finger interactions.

To find these interactions some sub‐goals were established, namely to design the interactions, to build a prototype with which to test them, and to demonstrate their usability and effectiveness through user testing.

So the main goal of this project “is to find the most useful and usable multi‐touch (finger) interactions with pen‐enabled interactive screens” (van Lewen, 2010) that are efficient and accurate in an application domain.

This domain was not initially defined. The general concept of a sketch application already gave some idea of what the area could be: CAD tools, digital arts, digital documents and notes, amongst many others in which sketching applications would be equally valid. This created the need to research a suitable application domain for the project, which then became the first objective.

1.3 Contribution

The expected result is a prototype that enables the evaluation of a combination of pen and finger interactions that contribute to improving the initial conceptualization of a sketching CASE tool system.

In the course of this project, the requirements and users’ needs for the prototype have to be researched and devised. From that work, a set of guidelines and user needs for sketching CASE tools could be compiled and reused in other projects within the same domain.

In addition, a set of natural user interactions for sketching CASE tools in small touch‐ and pen‐enabled environments could be derived from the interactions created for the prototype.

1.4 Approach

The project followed a User Centered Design (UCD) approach.

Because there was initially no concrete application domain, the project began with a search for one. This search involved the review and analysis of existing sketching tools in general, followed by a more focused analysis of the tools existing within the candidate domains.

This is a very interaction‐focused project, and since the approach was UCD, users participated in many steps of the project, mainly in the user studies and in the evaluation stages of the prototype’s development cycles.

A user study was conducted using Model Driven Inquiry (MDI) on the human activities involved in creating sketches for a new system. The models and the initial conceptualizations were created simultaneously, and then the observations and interviews were carried out.

An important part of the approach is the cyclic nature of the project’s development. The results from the user studies heavily influenced the conceptualizations when these were reviewed afterwards. Likewise, the interactions were conceptualized, integrated into the prototype and evaluated in user tests; adjustments were then made to the interactions or new interactions were devised, and the cycle started all over again.

1.5 Document Organization

This document is divided into seven chapters, and this structure is directly related to the research approach taken in the project.

The first chapter is this Introduction, where the motivation, problem and objectives, contributions, approach and the structure of the document are stated.

The second chapter is the State of the Art, which describes the context and the current state of the technology on which the project is based. It covers the main concepts and interaction style of the Touch environment, and looks at Sketch applications in order to find the application domain discussed earlier. Then some existing tools, namely CASE tools, are analyzed. The type of technology that allows this project to exist is also briefly introduced.

The third chapter is the Problem Analysis, where an inquiry is conducted using the MDI approach mentioned in the previous section, with the participation of users, to gain a better understanding of the project requirements and user needs.

The fourth chapter is the Concept of the prototype, where the initial concepts, affordances and mapping of the interactions are presented. The tasks are also described and a storyboard is presented.

The fifth chapter covers the Prototype, where the application itself is presented. The hardware and software used are briefly covered, and then the architecture and the design of the interface are described.

The sixth chapter, the Validation, follows. It is about the user testing and evaluation stages, and covers all three stages of tests that were performed during the development of the prototype: first the two initial design‐guiding evaluation stages and then the final validation stage.

The seventh chapter, the Conclusion, summarizes what was presented and found throughout the dissertation, as well as some of the difficulties encountered during the project. It closes with some perspectives on future work, including functionalities or extensions that could be implemented.

A few appendices complement this document. Appendix 1 covers the user studies and is therefore related to the third chapter. Appendix 2 concerns the fourth chapter, and Appendix 3 contains the material from the evaluations and is therefore related to the sixth chapter.

2 State of the art

This chapter gives an overview of the current state of the project’s domain.

Since this project has a very strong emphasis on HCI, this chapter covers the main concepts and interaction style of the Touch environment. It is a lot of material, but all of it is important to provide a strong background and context for the area and for what is involved in designing for the new type of interface used in this project.

After the initial concepts and aspects of the touch environment, several Sketch applications are analyzed to find the project’s application domain, followed by some related work and some existing tools, namely CASE tools, as that is the chosen domain.

Finally, the chapter gives a historic overview of the technology that allows this project to exist, focusing on the type of technology used in the project.

2.1 The touch environment

This project is based on a recent interface type, the Natural User Interface (NUI). This section presents that interface type and compares it to other interface types. It also mentions some aspects of user‐computer interaction that this new paradigm supports, and then presents some guidelines that helped shape the design of the prototype.

2.1.1 Interface type NUI

To better understand what a natural user interface is, this section analyzes the role and context of the NUI. As it is “the next generation of interfaces” and it is possible to interact with these interfaces “using many different input modalities, including multi‐touch, motion tracking, voice, and stylus”, our input options are increased. However, “NUI is a new way of thinking about how we interact with computing devices and it is not just about the input” (Blake, Natural User Interfaces in .NET, 2011).

Blake (Blake, Natural User Interfaces in .NET, 2011) defines the Natural User Interface as follows:

“A natural user interface is a user interface designed to reuse existing skills for interacting directly with content”.

From this definition we can point out a few important aspects:

The definition says that the interfaces are designed, which means that they are planned to have appropriate interactions for the user and the content.

Because users are humans, they possess skills that were gained throughout their lives. NUIs reuse those skills and make use of today’s technology to give users more natural interfaces.

The content can be interacted with directly; this means that manipulating content directly should be the primary method of interaction. The interface can have controls such as buttons when necessary, but they should be secondary to direct content manipulation.

Bill Buxton (Buxton, "CES 2010: NUI with Bill Buxton" at MSDN's Channel 9, 2010) has another definition of NUI:

An interface is natural if it "exploits skills that we have acquired through a lifetime of living in the world."

It is interesting that this definition not only says that skills are reused, so the user does not necessarily have to learn new ones, but also clearly states that these abilities are not only one’s inborn abilities but also the skills that are learned throughout life.

Further ahead in the chapter these abilities and their aspects will be explored in more detail.

2.1.1.1 Comparing NUI vs. GUI and CLI

Comparing NUI to other interface types will make it easier to understand the definitions presented above.

Graphical User Interfaces (GUIs) use Windows, Icons, Menus and Pointers (WIMP), where windows, menus, and icons are “artificial interface elements” for output and a pointing device (such as a mouse or a touchpad) is used for input. The Command Line Interface (CLI) has “text output and text input using a keyboard” (Blake, Natural User Interfaces in .NET, 2011). NUIs use the Objects, Containers, Gestures and Manipulations (OCGM) interaction style, which roughly means that objects and containers are used for output, and gestures and manipulations are used as input; the next section covers OCGM in more detail.

The prime difference between these interface types is not only in the input modality they use (keyboard, mouse or touch). Also, “CLI and GUI are defined explicitly in terms of the input device, while NUI is defined in terms of the interaction style”. NUI, by contrast, can use any type of device or interface technology, as its interaction style is focused on reusing existing skills (Blake, Natural User Interfaces in .NET, 2011).

GUI “uses windows, menus, and icons for primary interface elements” (Blake, Natural User Interfaces in .NET, 2011); in contrast, NUI interacts directly with content. One might therefore assume that NUI does not need controls; however, as said in the previous section, that assumption would not be correct. It only means that direct manipulation of the content should be the primary interaction method and that controls should be secondary to the content.

Blake (Blake, Natural User Interfaces in .NET, 2011) says “GUI is the stable technology and NUI is the more capable, easier to learn, and easier to use technology”. NUI will therefore not take the place of existing GUIs or even CLIs: “the world migrated from CLI application to GUI applications because GUI was more capable, easier to learn, and easier to use in everyday tasks”. Just as happened with CLIs, NUIs will be used for the more general‐purpose interfaces, while GUIs will still be used for those specialized tasks where, despite being limited to mouse and keyboard, they are the most effective way to accomplish them.

An example is a “mixed” user interface in which the traditional pointing device may be necessary for precise pointing tasks, and yet the interface would be considered a NUI even if it still uses components of a GUI. Since NUI is not about input devices but about interaction style, “it would be valid to design a NUI that used keyboard and mouse as long as the interactions are natural” (Blake, Natural User Interfaces in .NET, 2011).

2.1.2 OCGMinteractionstyle

As said in the first chapter NUI uses OCGM as the interaction style, this section explores

its components.

Ron George (George, "Welcome to the OCGM Generation! Part 2" at Ron George Blog, 2009) explains that “OCGM breaks down the basis of all future interfaces into two categories, (…) one for Items and one for Actions. (…) Those are broken down into two subcategories” each: Objects and Containers for the Items, and Manipulations and Gestures for the Actions.

Objects

Objects can be anything and take any shape on the interface: a picture, a textbox, an icon or a button. An object represents either a thing or an action of the system. Because objects are defined so broadly, developers are freed from thinking only in terms of Icons and Windows and can explore other ideas.

Containers

A Container represents the bonds between objects. In an interface, it takes shape as the relationship existing amongst objects, and it is not confined to the traditional form of a physical box or window. For instance, “they could be 5 balls circled around a larger ball which forms a sort of a menu. They could be a simple tagging system”, which, when activated, could “reveal the tagged objects and therefore reveal the container” (George, "Welcome to the OCGM Generation! Part 2" at Ron George Blog, 2009).

One can consider manipulations and gestures as being objects, and in this sense they could also be enveloped by containers. Furthermore, Containers could be considered objects themselves. The interface is therefore composed of objects and the relationships between them. These relationships are the key to managing the objects, and understanding them is a fundamental part of the design.
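The idea that a Container is a relationship among objects, and is itself an object, can be illustrated with a small data model. This is only a sketch under stated assumptions: the class names `OcgmObject` and `Container`, the `reveal` method and the example items are hypothetical and do not come from George's posts or from the prototype.

```python
from dataclasses import dataclass, field


@dataclass
class OcgmObject:
    """Anything on the interface: a picture, a textbox, an icon, a button."""
    name: str


@dataclass
class Container(OcgmObject):
    """A relationship among objects; it is an object too, so it can be nested."""
    members: list = field(default_factory=list)

    def reveal(self):
        """Return the names of all objects reachable through this container."""
        names = []
        for member in self.members:
            names.append(member.name)
            if isinstance(member, Container):
                names.extend(member.reveal())
        return names


# Hypothetical example: a tag groups two objects; a workspace groups the tag
# together with one more object.
photo = OcgmObject("photo")
note = OcgmObject("note")
holiday_tag = Container("tag:holiday", [photo, note])
workspace = Container("workspace", [holiday_tag, OcgmObject("help button")])
revealed = workspace.reveal()
```

Activating the hypothetical `workspace` container reveals the tag and, through it, the tagged objects, which mirrors the tagging example quoted above.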

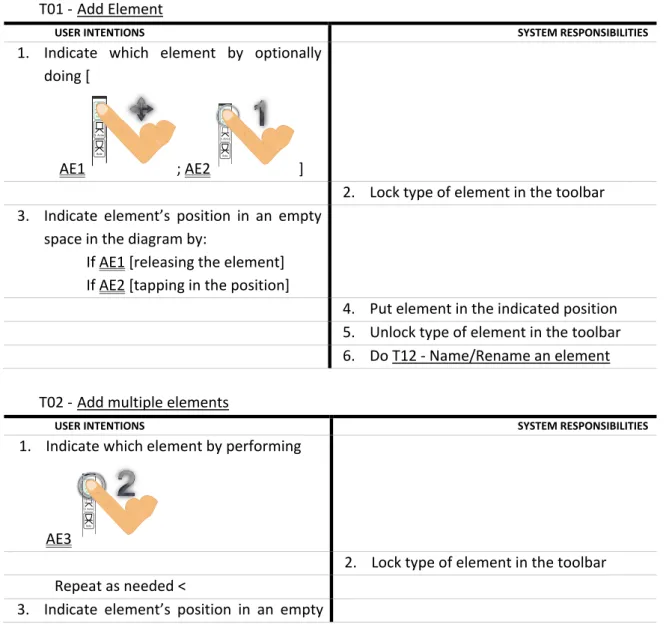

Manipulations and gestures

The main difference between Manipulations and Gestures is the nature of the

interaction, as represented in Figure 1.

Manipulations are the natural direct interactions mentioned before. They are easy to perform and to understand, and fairly intuitive. They are more appropriate for beginner and intermediate users, and should be designed so that they can be activated accidentally, which makes them easy to discover. A manipulation usually has an immediate reaction on the interface; this feedback makes it easy for the user to understand the result of the action.

Gestures are the opposite of Manipulations. They are indirect, complex actions that “are usually not intuitive (draw a ? for help), and are not geared towards the first user experience”.

Overall, the interface should be designed for accidental activations, and these should always be geared towards a Manipulation and not a Gesture, as Manipulations can be used to perform most of the common daily tasks that the user needs (George, "Welcome to the OCGM Generation! Part 2" at Ron George Blog, 2009). For example, swiping the hand to the left on a touch screen, which is something that could happen by accident very frequently, should never be assigned to a harmful or unrecoverable action such as deleting a file or clearing the screen.

Another interesting example: “to start the self‐destruct on a ship,” one does not simply press a button. Usually one has to “perform a gesture, several manipulations in a sequence that are recognized at the end of the sequence. Only then, after the order is maintained and accomplished does a gesture get recognized and then the action is performed.” (George, "Welcome to the OCGM Generation! Part 2" at Ron George Blog, 2009)

Ron George describes four differences between Manipulations and Gestures that make them easier to distinguish:

Table 1: Differences between Manipulations and Gestures. (George, "Terminology: the difference between a gesture and a manipulation" at Ron George Blog, 2009)

| Manipulations | Gestures |
| --- | --- |
| Contextual – they only happen at specific location(s) or on specific object(s) | Not contextual – they can be anywhere in the system in location and time |
| React immediately – there is a direct correlation in cause and effect between your interaction and the system (this does not include visual affordance) | The system waits for the series of events to complete to decide on how to react (again, this does not include visual affordance) |
| Can be single state, but are usually 3 or more states (see Bill Buxton’s paper on Chunking and Phrasing (Buxton, Chunking and Phrasing and the Design of Human‐Computer Dialogues, 1986)) | They contain at least 2 states |
| Direct (could possibly be considered indirect by way of augmenting your actual interactions with the reaction of the system) – interactions directly affect the system, object, or experience in some way | Indirect – they do not affect the system directly according to your action. Your action is symbolic in some way that issues a command, statement, or state. |

Figure 1: Gestures vs. Manipulations. (George, "Terminology: the difference between a gesture and a manipulation" at Ron George Blog, 2009)
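The contrast between the two kinds of action can be made concrete with a short sketch: a manipulation reacts to every input at once, whereas a gesture only issues its command after a complete, correctly ordered sequence of steps. The class names, method names and step labels below are hypothetical and serve illustration only; they are not taken from George's posts or from any real toolkit.

```python
class DragManipulation:
    """Manipulation: contextual and immediate; every input moves the object."""

    def __init__(self, position=(0, 0)):
        self.position = position

    def on_move(self, dx, dy):
        x, y = self.position
        self.position = (x + dx, y + dy)  # the reaction happens right away
        return self.position


class SequenceGesture:
    """Gesture: a command issued only after a full, ordered sequence of steps."""

    def __init__(self, steps, command):
        self.steps = list(steps)
        self.command = command
        self.progress = 0

    def on_step(self, step):
        if step == self.steps[self.progress]:
            self.progress += 1
        else:
            # Wrong order: start over (the step may itself be a valid first step).
            self.progress = 1 if step == self.steps[0] else 0
        if self.progress == len(self.steps):
            self.progress = 0
            return self.command  # recognized only at the end of the sequence
        return None


drag = DragManipulation()
moved_to = drag.on_move(3, 4)

# Hypothetical three-step sequence guarding an unrecoverable action.
gesture = SequenceGesture(["insert key", "turn key", "press button"], "self-destruct")
inputs = ["insert key", "press button", "insert key", "turn key", "press button"]
results = [gesture.on_step(step) for step in inputs]
```

In this sketch the out-of-order "press button" resets the sequence, so the command is only returned by the final step, once the order has been maintained from start to finish.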


2.1.3 Using the users: Innate abilities and learned skills

As mentioned above, natural interfaces try to reuse skills and abilities the user already possesses; in a sense, NUI uses the users themselves to improve design and usability. This section presents an overview of those skills and abilities.

Humans “are all born with certain abilities”, for instance “the ability to detect changes in our field of vision, perceive differences in textures and depth cues, and filter a noisy room to focus on one voice” (Blake, Natural User Interfaces in .NET, 2011). Other abilities develop as one matures, such as the abilities to eat, walk or talk.

The ability to learn

Humans also have a very important innate ability: the ability to learn. However, although both skills and abilities are used to accomplish tasks, “learned skills are different than innate abilities because we must choose to learn a skill, whereas abilities mature automatically” (Blake, Natural User Interfaces in .NET, 2011).

Using this ability to learn, humans build upon their simple innate abilities to acquire skills and accomplish simple tasks. By building new skills on top of existing ones, we gradually learn more complex skills and can perform increasingly complex tasks. Skills can therefore be subdivided into two categories: simple skills and composite skills.

Simple skills

“Simple skills are learned skills that only depend upon innate abilities. This limits the complexity of these skills, which also means simple skills are easy to learn, have a low cognitive load, and can be reused and adapted for many tasks without much effort” (Blake, Natural User Interfaces in .NET, 2011).

Tapping is an example: it is a natural human behavior that requires only the innate ability of fine eye‐hand coordination to master (Blake, Natural User Interfaces in .NET, 2011). Tapping is easily reused in interfaces; for instance, it can be used to select an element or to activate a button, just as it is used in everyday situations to call attention to an object or to push a button on a car radio.

Composite skills

“Composite skills are learned skills that depend upon other composites or skills, which means they can enable you to perform complex, advanced tasks. It also means that relative to simple skills, composite skills take more effort to learn, have a higher cognitive load, and are specialized for a few tasks or a single task with limited reuse or adaptability” (Blake, Natural User Interfaces in .NET, 2011).
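The two definitions can be read as a dependency rule: a skill is simple when everything it depends on is an innate ability, and composite when it depends on at least one other learned skill. The sketch below illustrates that rule only. The dependency table is an assumption made for the example (in particular, which innate abilities each skill relies on), and the `depth` function is merely a crude, hypothetical proxy for how many layers of learning sit beneath a skill.

```python
INNATE_ABILITIES = {"eye-hand coordination", "depth perception"}

# Each learned skill maps to what it depends on (illustrative entries only).
SKILL_DEPENDENCIES = {
    "tapping": {"eye-hand coordination"},
    "moving a mouse": {"eye-hand coordination"},
    "acquiring a target with a pointer": {"moving a mouse"},
    "mouse click": {"moving a mouse", "acquiring a target with a pointer"},
}


def classify(skill):
    """Return 'simple' if the skill depends only on innate abilities, else 'composite'."""
    dependencies = SKILL_DEPENDENCIES[skill]
    return "simple" if dependencies <= INNATE_ABILITIES else "composite"


def depth(skill):
    """Count the layers of learned skills beneath a skill (innate abilities are 0)."""
    if skill in INNATE_ABILITIES:
        return 0
    return 1 + max(depth(dep) for dep in SKILL_DEPENDENCIES[skill])


tapping_kind = classify("tapping")
click_kind = classify("mouse click")
click_depth = depth("mouse click")
```

Under these assumed dependencies, tapping sits one layer above an innate ability, while the mouse click sits three layers up.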

Going back to the previous example, tapping is a simple skill but clicking with the mouse is not; the two often accomplish the same result, but the actions are different. The mouse click “is

a composite skill because it depends upon the skills of holding and moving a mouse and

acquiring a target with a mouse pointer. Using those two skills together requires a conceptual

Blake (Blake, Natural User Interfaces in .NET, 2011) indicates three reasons as to why it is a composite skill:

- More effort to learn ‐ We must invest a lot of practice time with the mouse before we can use it quickly and accurately.
- Higher cognitive load ‐ Mouse skills fall towards the basic side of the skill continuum, but the mouse still demands a measurable amount of attention.
- Specialized with limited reuse ‐ the master mouser skills you spent so much time developing have no other applications besides cursor‐based interfaces.

For experienced users, using the mouse is easy, as they do not even think about it, but it is not so straightforward for less experienced users. Practice and conscious effort are required to reach a significant level of skill with the mouse or with any other composite skill.

Using skills increases cognitive load

“Cognitive load is the measure of the working memory used while performing a task. The concept reflects the fact that our fixed working memory capacity limits how many things we can do at the same time.” (Blake, Natural User Interfaces in .NET, 2011). John Sweller (Sweller, 1988) developed this theory on cognitive load.

“Cognitive load theory states that extraneous load should be minimized to leave plenty of working memory for germane load, which is how people learn. The inherent difficulty of a subject cannot be changed, but the current intrinsic load can be managed by splitting complex subjects into sub‐areas.” (Blake, Natural User Interfaces in .NET, 2011). This can be applied to many areas, but for interface design it means that the cognitive load created by the skills used in the interaction should be minimal; it is more important to leave sufficient space in the working memory for the load involved in learning the interface.

In relation to skills, this means that the interface should be designed to make use of simple skills rather than composite skills. This does not mean that the latter cannot be used when appropriate.

2.1.4 NUI Guidelines

As in other interface types and styles, there are some rules of thumb, patterns or templates to guide designers through their process, and NUI is no exception. Blake (Blake, Natural User Interfaces in .NET, 2011) suggests four guidelines based on the abilities, skills and cognitive loads above.

Figure 2: Blake's motto for NUI guidelines. (Blake, Natural User Interfaces in .NET, 2011)

2.1.4.1 Instant expertise

This guideline says that designers should reuse the skills users already possess when they are designing interactions. In this way users will not have to learn new skills to use the interface; they will be instant experts, as they do not have to spend much effort or time getting up to speed.

Instant experts can be created in one of two ways (Blake, Natural User Interfaces in .NET, 2011):

- Reusing domain specific skills – Targeted users already have most of those skills, but there can be differences in the skills within the target group population. Also, most domain specific skills are not easy to use in new situations, because they are composite skills.
- Reusing common human skills – Since humans will be the users, reusing simple skills that they have developed just by being human is a better approach in most scenarios.

2.1.4.2 Cognitive load

The cognitive load guideline states that one “should design the most common interactions to use innate abilities and simple skills”, because “the majority of the interface will have a low cognitive load and be very easy to use”, and “the interface will be very quick to learn, even if some or all of the skills are completely new” (Blake, Natural User Interfaces in .NET, 2011).

This guideline could conflict with the instant expertise guideline when users already possess a composite skill. For instance, for a user who has the composite skill of using the mouse, an interface where most of the interactions require the user to learn new simple skills still has a lower cognitive load than the same interface where the interactions are mostly performed with the mouse. This is because using the mouse is a composite skill and has a higher cognitive load than innate abilities or simple skills. Moreover, the cognitive load of learning the new simple skills no longer weighs on the balance once the user has learned them. When it is not possible to reuse simple skills, teaching simple skills should be the priority rather than reusing composite skills, as over time “teaching and using new simple skills requires less effort and is more natural” (Blake, Natural User Interfaces in .NET, 2011).

2.1.4.3 Progressive learning

In general HCI design, “a smooth learning path from basic tasks to advanced tasks” should be provided to the user. As NUIs are no different, they should “enable the user to progressively learn from novice to expert” but “at the same time, the interface should not get in the way of expert users doing advanced tasks” (Blake, Natural User Interfaces in .NET, 2011).

Usually advanced tasks get broken down into simpler subtasks that are easily handled. In an interface, simpler tasks usually require simple skills and advanced tasks require complex skills. When it is not possible to subdivide an important advanced task into simpler tasks, a complex skill has to be used to achieve that task. In this case the task should not be part of the core interface for beginner users, and complex tasks should be limited to the crucial tasks that cannot be done in any other way.
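The progressive learning guideline can be read as a filter over a task tree: advanced tasks are split into subtasks, the undivided tasks that need only simple skills form the core interface, and undivided tasks that need a composite skill are kept out of the core and retained only when crucial. The sketch below illustrates that reading. The `Task` structure, its field names and the example tasks are all hypothetical; none of them come from Blake's book or from the prototype.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    skill: str = "simple"  # "simple" or "composite" (assumed labels)
    crucial: bool = False
    subtasks: list = field(default_factory=list)


def core_tasks(task):
    """Undivided tasks a beginner can be offered: those needing only simple skills."""
    if task.subtasks:
        found = []
        for sub in task.subtasks:
            found.extend(core_tasks(sub))
        return found
    return [task.name] if task.skill == "simple" else []


def expert_tasks(task):
    """Undivided composite-skill tasks, kept only when they are crucial."""
    if task.subtasks:
        found = []
        for sub in task.subtasks:
            found.extend(expert_tasks(sub))
        return found
    return [task.name] if task.skill == "composite" and task.crucial else []


# Hypothetical task tree for a diagram sketching tool.
diagram = Task("draw a diagram", subtasks=[
    Task("sketch a box"),
    Task("drag a box"),
    Task("batch rename elements", skill="composite", crucial=True),
    Task("record a macro", skill="composite"),
])
beginner_view = core_tasks(diagram)
expert_view = expert_tasks(diagram)
```

In this example the non-crucial composite task is dropped altogether, which matches the recommendation that complex tasks be limited to those that cannot be done in any other way.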

2.1.4.4 Direct interaction

This guideline states that the interface should be designed “to use interactions that are direct, high‐frequency, and appropriate to the context” (Blake, Natural User Interfaces in .NET, 2011). To understand this guideline one needs to know what direct, high‐frequency and contextual interactions are.

Direct Interactions

There are three types of direct interactions, as illustrated in Figure 3. The top of the figure illustrates Spatial Proximity: the finger is touching over the object itself. In the center of the figure there is Temporal Proximity: the user is acting and the interface is reacting at the same time (real time). At the bottom is the third type of directness, Parallel Action, where the reaction of the interface is parallel to the action of the user in at least one degree‐of‐freedom.

Figure 3: Types of Directness illustration. (Blake, Natural User Interfaces in .NET, 2011)

In the real world one interacts directly with objects, which in the interaction world are the content, and one has a natural sensory‐rich experience doing it. For instance, when someone drags a sheet of paper over a table, in a brainstorm session for example, the sheet follows the hand movement at the same time and in the same direction, so it is a direct interaction which reaches the three types of directness. As one drags the sheet over the table, concentrating only on the senses of the hand, one feels on the fingertips the texture of the paper, the pressure of the exerted force and the resistance of the drag, and as the fingers and hand bend one can instantly perceive in which direction the sheet is moving. Even without visual feedback there are already multiple cues, enough to let the person know how and what they are manipulating.

Humans naturally use hands and fingers to directly manipulate real objects and become better at it as they grow and develop. Interactions that make use of the simple skills and innate abilities humans already own, and “that are direct also tend to be faster than interacting indirectly with content through a mouse and interface elements” (Blake, Natural User Interfaces in .NET, 2011). They also tend to be smaller, and thus they can be high‐frequency interactions.

High‐frequency interaction

“Interfaces that allow many quick interactions provide a more engaging and realistic experience. Each individual interaction may have only a small effect, but the bite‐sized (or finger‐sized, perhaps) interactions are much easier and quicker to perform. This allows the